SFP+ Direct Attach Copper (10GSFP+Cu) is likely the cheapest way of setting up a 10 Gigabit Ethernet connection between your desktop and Unraid server. This article covers the basics of Direct Attach Copper, its advantages and disadvantages, and when to use it. What it won’t cover is how to install and configure an SFP+ PCIe card for Direct Attach Copper in an Unraid server, as this article is meant as an explainer. There will be further articles on the subject, but to keep this one at a reasonable length, I couldn’t cover everything.

With media files getting bigger, hard drive capacities growing, and cache drives (i.e., SSDs) becoming more affordable, fast networking matters more and more for Unraid. While you could buy a motherboard with an onboard 2.5GBASE-T or even 5GBASE-T network interface controller (NIC), few consumer models offer 10 Gigabit Ethernet onboard.
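To put some numbers on that, here is a quick back-of-the-envelope calculation in Python. It is only a sketch: it assumes a hypothetical 50 GB file and the full line rate of each link, and it ignores protocol overhead and drive speed, so real transfers will be slower.

```python
# Best-case transfer times at full line rate. Real transfers will be slower
# because of protocol overhead and the speed of the drives involved.

LINK_SPEEDS_GBIT = {"1GbE": 1.0, "2.5GbE": 2.5, "5GbE": 5.0, "10GbE": 10.0}


def transfer_seconds(size_gb: float, link_gbit: float) -> float:
    """Seconds to move size_gb gigabytes (10**9 bytes) over a link running
    at link_gbit gigabits per second, ignoring all overhead."""
    size_gbit = size_gb * 8  # 1 byte = 8 bits
    return size_gbit / link_gbit


if __name__ == "__main__":
    file_size_gb = 50  # hypothetical large video file
    for name, speed in LINK_SPEEDS_GBIT.items():
        print(f"{name}: {transfer_seconds(file_size_gb, speed):.0f} s")
```

Under those assumptions, the file takes 400 seconds over 1GbE but only 40 seconds over 10GbE. Keep in mind that 10GbE tops out at roughly 1.25 GB/s, more than a single hard drive can typically deliver, so the SSD cache drives mentioned above are what let you actually use that bandwidth.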

Upgrading your Unraid server with a 10GBASE-T PCIe expansion card is possible, but it will end up costing more than just the card, as you might need a new switch and expensive cables to achieve stable 10GbE speeds. All in all, such an upgrade could cost you hundreds of dollars. Direct Attach Copper might not be as well known as twisted pair or fiber optic connections, but it might just be the answer to your prayers.

Learning the networking language

Before taking an in-depth look at Direct Attach Copper and its 10GbE capabilities for Unraid, it is vital to learn the networking lingo. As with just about every subject, there are a few terms we use wrongly in everyday life.

What you likely call an Ethernet cable isn’t actually an Ethernet cable, but a twisted pair cable. This list isn’t meant to shame anyone; I admit to making the same mistakes. But to follow the rest of the article, you need to understand what certain terms actually mean.

- Ethernet: A family of wired computer networking technologies. In day-to-day language, we often refer to Ethernet cables, Ethernet ports, or Ethernet jacks, all of which are most likely used in the wrong context. What we call an Ethernet cable (the one with what we call an RJ45 jack) is actually a twisted pair cable with an 8P8C modular connector.

- GbE: Gigabit Ethernet.

- 8P8C modular connector: This is what you will find on either end of a twisted pair cable. It is generally referred to as an RJ45 jack or connector. In fact, RJ45 originally referred to a specific wiring configuration of an 8P8C connector. As RJ45 is today used to describe the connector, I will also be doing so in this article. You can assume that I’m describing a twisted pair cable with an 8P8C modular connector whenever I mention a twisted pair cable.

- 10GBASE-T: Provides 10 Gbit/s connections over unshielded or shielded twisted pair cables, over distances of up to 100 meters (ca. 328 ft). I will use this term instead of “10 GbE twisted pair cable with an 8P8C modular connector”. In the same manner, 2.5GBASE-T and 5GBASE-T describe 2.5 GbE and 5 GbE over a twisted pair cable.

- 10GSFP+Cu: Provides 10 Gbit/s connections over Direct Attach Copper cables.

- Small form-factor pluggable (SFP) network interface module: Just like the 8P8C modular connector, this is a network interface. The advantage SFP and SFP+ ports have over the 8P8C modular connector is that different modules can be plugged into them, making the same port compatible with both copper and fiber optics. Below are two images of such modules, one with an RJ45 interface and the other with an LC interface.

- SFP+: Allows for speeds up to 10 Gbit/s, while SFP maxes out at 1 Gbit/s.

- Network interface controller (NIC): Hardware that connects a computer to a network. For example, a PCIe card, or an RJ45 port on a motherboard together with its controller.

Why not use twisted pair cable with an RJ45 connector for 10GbE on Unraid?

Generally speaking, there are two routes you can take when upgrading your Unraid server to 10GbE: you either buy a 10GbE card with an RJ45 connector for twisted pair cable (10GBASE-T), or one with an SFP+ interface.

Your first thought might well be to stick with twisted pair cabling because that is what you have been using so far. In that case, you would be buying a card that supports 10GBASE-T. However, there are some major drawbacks to 10GBASE-T:

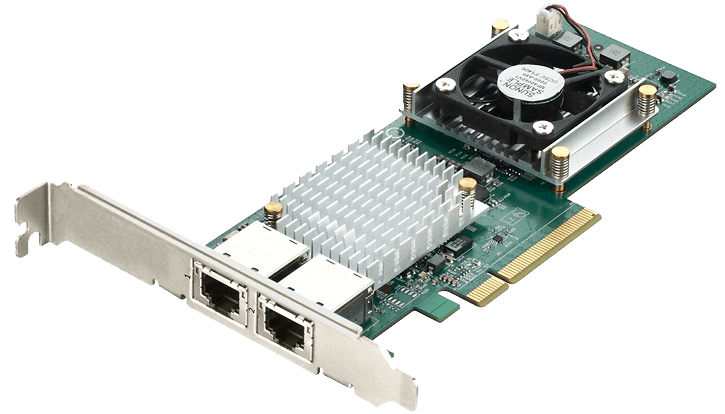

- Cheap 10GBASE-T NICs are starting to appear, but good ones are still pricey. The ASUS XG-C100C, for example, costs around US$99, yet user reports suggest it suffers from thermal throttling.

- The controllers on 10GBASE-T NICs produce a lot of heat, which is why 10GBASE-T switches frequently need active cooling and can be loud. 10GBASE-T PCIe cards with insufficient cooling can throttle under load. If your Unraid server lives in a server case with powerful fans, this shouldn’t be an issue, but it can be in desktop cases, especially if the NIC sits next to another heat-producing component such as a graphics card. An SFP+ port requires approximately 0.7 W, whereas a 10GBASE-T port requires between 2 W and 5 W.

- Direct Attach Copper plugs straight into an SFP+ port without an adapter module, and if you need them, 10GbE modules for twisted pair and optical fiber are also available. This makes SFP+ ports, especially on switches, much more flexible.
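Since the power a port draws ends up as heat, the per-port figures above also translate into running costs. The Python sketch below is a rough estimate assuming a hypothetical four-port setup running 24/7; it uses 0.7 W for SFP+ and the 2 W to 5 W range for 10GBASE-T, and ignores everything else the NIC or switch consumes.

```python
# Rough yearly energy use per port type, based on the per-port figures above:
# ~0.7 W for SFP+ and 2-5 W for 10GBASE-T. All other consumption is ignored.

HOURS_PER_YEAR = 24 * 365


def yearly_kwh(watts_per_port: float, ports: int) -> float:
    """Energy in kWh for `ports` ports running 24/7 for one year."""
    return watts_per_port * ports * HOURS_PER_YEAR / 1000


if __name__ == "__main__":
    ports = 4  # hypothetical small setup
    print(f"SFP+ (0.7 W/port):    {yearly_kwh(0.7, ports):.1f} kWh/year")
    print(f"10GBASE-T (2 W/port): {yearly_kwh(2.0, ports):.1f} kWh/year")
    print(f"10GBASE-T (5 W/port): {yearly_kwh(5.0, ports):.1f} kWh/year")
```

With four ports, that works out to roughly 25 kWh a year for SFP+ versus about 70 to 175 kWh for 10GBASE-T, all of which has to be dissipated as heat.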

Another major downside of 10GBASE-T is latency: Direct Attach Copper offers 15 to 25 times lower transceiver latency. This might not have much of an influence on your home server running Unraid, but it does matter in large server clusters. Why do I mention this? Because data centers favor SFP+ for exactly this reason, and their old hardware, including network cards and switches, often ends up cheap on the second-hand market.

Despite everything mentioned, there are obviously situations in which twisted pair cable still comes out on top. If your home has in-wall wiring, the choice has basically been made for you. If your cable runs are longer than seven meters, you will need to opt for twisted pair or fiber optic cable (more on that in just a bit).

What about fiber optic cables?

The main advantage fiber optic cables have over copper cables is speed over distance. Multi-mode fiber can carry a 10 Gbit/s signal up to 300 m, and single-mode fiber up to 10 km. For comparison, a CAT7 twisted pair cable (the expensive stuff) supports 10 Gbit/s speeds up to 100 meters, though CAT6a is already rated for 10GBASE-T over that distance.
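If you are unsure which medium a given cable run calls for, the reach figures quoted in this article can be turned into a simple lookup. This Python sketch is illustrative only: `media_for_distance` is a hypothetical helper, and it ignores practical constraints such as existing in-wall wiring.

```python
from __future__ import annotations

# Maximum 10 Gbit/s reach per medium, using the figures quoted in this article,
# ordered from shortest to longest reach.
MAX_REACH_M = [
    ("Direct Attach Copper (10GSFP+Cu)", 7),
    ("Twisted pair (10GBASE-T)", 100),
    ("Multi-mode fiber", 300),
    ("Single-mode fiber", 10_000),
]


def media_for_distance(distance_m: float) -> list[str]:
    """Return every medium whose quoted maximum reach covers distance_m."""
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    return [name for name, reach_m in MAX_REACH_M if distance_m <= reach_m]


if __name__ == "__main__":
    print(media_for_distance(5))   # short run between server and desk
    print(media_for_distance(50))  # e.g. a run between floors
```

A 5 m run between a server and a desk qualifies for every option, while a 50 m run between floors already rules out Direct Attach Copper.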

When connecting devices on different floors of your home with 10GbE, for example two switches, it might make sense to use fiber optic cables. Besides the aforementioned speed over distance, fiber optic cables do not experience interference from other cables or devices, and the connections do not require as much power as twisted pair. Just like Direct Attach Copper, fiber optic cables connect to an SFP+ interface, but they do require a module. When calculating the price, keep in mind that a fiber optic cable requires two such modules, one for each end.

Besides cost, which I will detail later, the downside of fiber optic cables is that they are extremely finicky. If you were to touch the end of such a cable with a slightly greasy finger, it could spell disaster. Even specks of dust can affect the connection’s stability. This Linus Tech Tips video shows just how convoluted the process of cabling with fiber optics is. We’re not even going to go into splicing fiber optic cables and all the expensive equipment that requires.

Fragility is another thing to take into consideration. Bend a fiber optic cable too much, and you might destroy it. Fiber optic cables are fantastic for networking; I just wouldn’t trust myself to do everything correctly when setting them up.

What is Direct Attach Copper?

Now that we’ve covered the drawbacks of 10GBASE-T NICs and fiber optics for 10GbE networking, it’s time to take a closer look at Direct Attach Copper and its advantages, as well as its disadvantages. Direct Attach Copper is a twinax copper cable that connects to an SFP+ interface without the need for any additional modules. In a home setting, the biggest advantage of Direct Attach Copper will be cost.

It’s not just the NIC that comes in cheaper, but also the switch, if you need one. Generally speaking, a 10GBASE-T switch requires more powerful components, bigger heat sinks, beefier power supplies, and sometimes even fans. Those costs add up, and as a consequence, 10GBASE-T switches bought new can be considerably more expensive than SFP+ switches. If you are in the market for a 10GbE switch with SFP+ interfaces, reviewers and the community regard MikroTik as a provider of solid, affordable hardware.

So far, it sounds as if Direct Attach Copper is the perfect solution for upgrading an Unraid server to 10GbE. Sadly, that isn’t quite the case. Direct Attach Copper has one major downside: the cable’s maximum length is seven meters. This limitation restricts its use to a few scenarios:

- Your Unraid server and workstation are close to each other, and you want a fast connection between the two. In this case, you don’t need a switch; just add an SFP+ PCIe card to each machine and connect them directly.

- Your Unraid server sits in, or close to, a networking or server rack. In this case, you would connect the Unraid server to the switch using a Direct Attach Copper cable, and use adapter modules (twisted pair or fiber) to reach your workstation. Other devices, such as TVs and printers, don’t require 10GbE and can stay on a 1GbE network.

- If multiple computers access the Unraid server, you could also use 10GbE only for the link between the server and the switch. That way, the computers share a much bigger pipe to the server: in theory, up to ten workstations on 1GbE connections could each saturate their own link while accessing the Unraid server at the same time.
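The arithmetic behind that last scenario is simple division. The Python sketch below, with hypothetical helper names, compares how much bandwidth each of ten 1GbE workstations gets when the server’s own link is 1GbE versus 10GbE, assuming all of them transfer at once, share equally, and lose nothing to overhead.

```python
# Bandwidth per workstation when several clients hit the Unraid server at
# once. Idealized: assumes equal sharing and no protocol overhead.


def per_client_gbit(server_link_gbit: float, clients: int, client_link_gbit: float) -> float:
    """Each client gets an equal share of the server link, but never more
    than its own link speed."""
    if clients < 1:
        raise ValueError("need at least one client")
    return min(client_link_gbit, server_link_gbit / clients)


def clients_at_full_speed(server_link_gbit: float, client_link_gbit: float) -> int:
    """How many clients can run at their full link speed simultaneously."""
    return int(server_link_gbit // client_link_gbit)


if __name__ == "__main__":
    for server_link in (1.0, 10.0):
        share = per_client_gbit(server_link, clients=10, client_link_gbit=1.0)
        print(f"{server_link:g} Gbit/s server link -> {share:g} Gbit/s per client")
    print(clients_at_full_speed(10.0, 1.0))
```

With a 1GbE server link, ten busy workstations get about 0.1 Gbit/s each; with a 10GbE link, each of them can run at its full 1 Gbit/s.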

Buying the right 10GbE NIC for Unraid

There are few things everyone agrees on, but recommending a 10GbE PCIe network interface controller (NIC) for Unraid is one of them: the Mellanox ConnectX-2 is the way to go. It isn’t the newest card, but it is still capable of everything you might want in a home server. If you can find the newer Mellanox ConnectX-3 for less, it certainly won’t be a bad purchase, but the ConnectX-2 offers everything you need.

A kit consisting of two Mellanox ConnectX-2 cards and a matching Direct Attach Copper cable will cost you no more than US$100 on the second-hand market. If you are patient, you might find such a kit for as little as half that price. Your eyes are not deceiving you: upgrading to 10GbE with Direct Attach Copper can cost you less than a single one of the cheapest 10GBASE-T NICs.
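To see why the kit compares so favorably, remember that a direct link between server and workstation needs a NIC at each end. The Python sketch below uses the prices quoted in this article (around US$99 for an ASUS XG-C100C, at most US$100 for a second-hand ConnectX-2 kit); the twisted pair cable is left as an optional parameter, and second-hand prices vary a lot.

```python
# Price comparison for a single point-to-point 10GbE link, using the prices
# quoted in this article. Second-hand prices fluctuate a lot.

XG_C100C_USD = 99.0                        # ASUS XG-C100C, approx. price per card
DAC_KIT_MAX_USD = 100.0                    # 2x ConnectX-2 + DAC cable, second hand
DAC_KIT_PATIENT_USD = DAC_KIT_MAX_USD / 2  # "as little as half that price"


def rj45_link_cost(card_usd: float, cable_usd: float = 0.0) -> float:
    """A direct server-to-workstation link needs one NIC at each end,
    plus a twisted pair cable (left at 0 by default)."""
    return 2 * card_usd + cable_usd


if __name__ == "__main__":
    print(f"10GBASE-T link: ~US${rj45_link_cost(XG_C100C_USD):.0f} + cable")
    print(f"DAC kit:        US${DAC_KIT_PATIENT_USD:.0f} to US${DAC_KIT_MAX_USD:.0f}")
```

Two XG-C100C cards come to about US$198 before you even buy a cable, roughly twice the upper end of the Direct Attach Copper kit.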

Further reading/viewing on Direct Attach Copper and Unraid

None other than Spaceinvader One, the YouTuber and Unraid guru, has covered this topic. In his video, you can learn how to set up an SFP+ network card and configure it in Unraid.